How Austin Heaton Creates Content Strategies

Learn how Austin Heaton creates content strategies for AI search. Discover his proven AEO frameworks for B2B SaaS, FinTech, and AI startups to drive pipeline.

Most content strategies still start in the wrong place. They begin with blog calendars, keyword volume, and traffic targets, then hope revenue shows up later. That playbook made sense when search was mostly a list of links. It breaks when buyers ask ChatGPT, Perplexity, Gemini, or Google’s AI surfaces for a recommendation and get a synthesized answer instead of ten blue links.

Austin Heaton’s approach to content strategy is different because the system doesn’t treat content as publishing output. It treats content as authority infrastructure. The goal isn’t to rank a page and celebrate impressions. The goal is to become the brand an answer engine can understand, trust, and cite when a buyer asks a decisive question.

That sounds like SEO with a fresh label. It isn’t. The shift is structural. AI systems don’t just match phrases. They assemble answers from entities, corroboration, context, and clean retrieval. If your strategy still revolves around top-of-funnel blog production without a strong authority layer underneath, you’re building surface area without building trust.

Your Content Strategy Is Already Obsolete

The most popular content advice is now the least useful. Publish more blogs. Target long-tail keywords. Build traffic first, then worry about commercial pages later. That sequence leaves many B2B brands visible to marketers but invisible to buyers using AI-assisted search.

The underlying problem isn’t that blogging stopped working entirely. It’s that blog-first strategy assumes search begins with discovery and ends with a click. Increasingly, discovery happens inside an answer. Your brand gets compressed into whether an AI model can retrieve you, interpret you, and decide you’re credible enough to mention.

That’s why the transition from SEO to AEO matters. Marketing teams aren’t just optimizing for rankings anymore. They’re optimizing to become the answer source behind the response.

What breaks first

Traditional programs fail in three places:

- Weak commercial coverage. Teams spend months publishing educational articles while core solution, service, and comparison pages remain thin or absent.

- Loose authority signals. The site says what the company does, but the web doesn’t reinforce it clearly enough for answer engines.

- Bad retrieval formatting. Pages may be readable to humans but hard for AI systems to parse, summarize, and cite.

A lot of teams also underestimate freshness. If a revenue page hasn’t been maintained, it can lose citation potential even if it still ranks. Austin’s perspective on update cadence is captured well in the 3 month content freshness rule, which is one reason his system treats content operations as ongoing infrastructure rather than one-time production.

Most content teams are still publishing for rankings. Buyers are increasingly choosing from recommendations.

Austin Heaton’s work matters here because he built a system around that shift. Instead of leading with traffic, he leads with revenue-bearing visibility in environments where AI decides which sources get surfaced at the moment of intent.

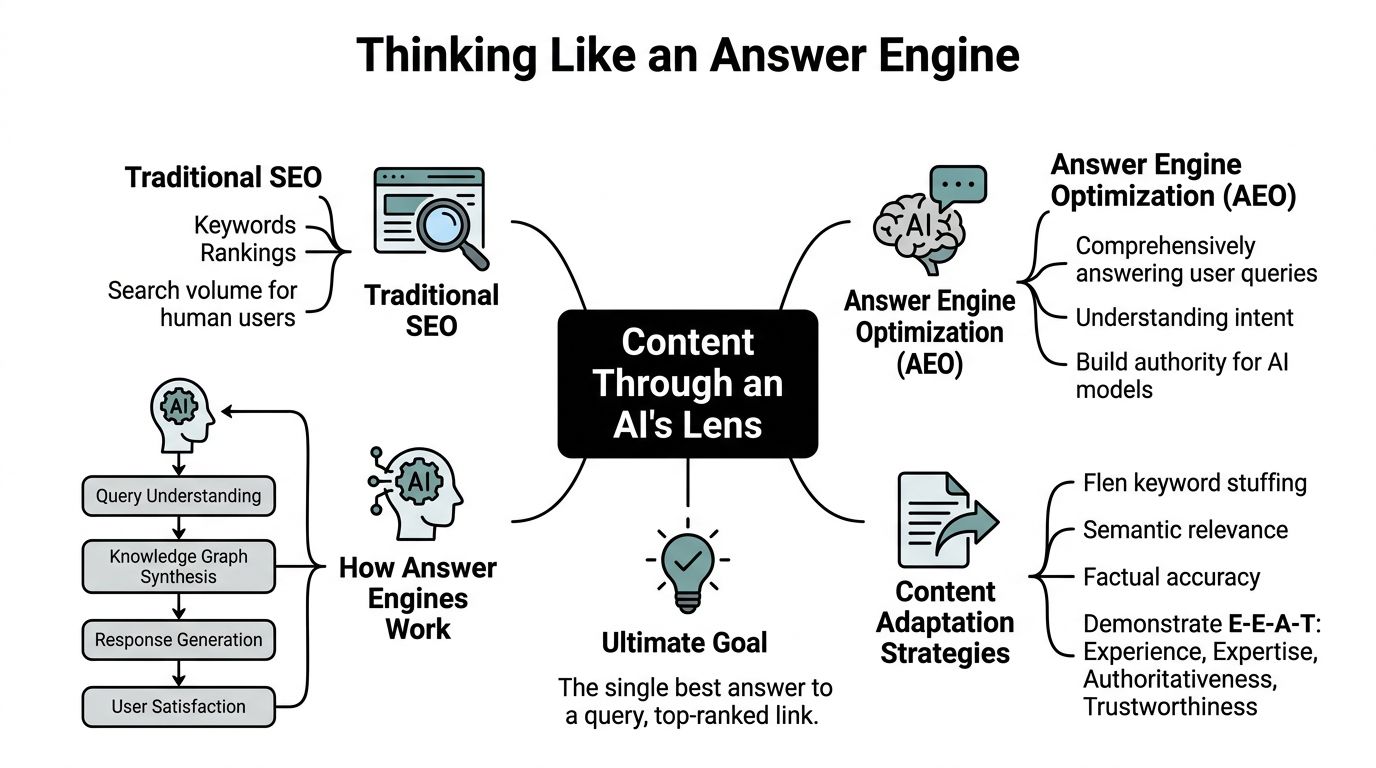

Thinking Like an Answer Engine

Traffic-first SEO trained teams to chase rankings. Answer engines reward source selection. That sounds like a small shift. It changes the entire content strategy.

An AI assistant does not evaluate your site the way a legacy search engine crawler does. It has to interpret the question, identify the entities involved, pull a usable answer from available sources, and decide which claims are reliable enough to include. Pages that win in that environment are easy to parse, easy to verify, and tightly aligned to buyer intent.

What the assistant looks for

In practice, answer engines evaluate several signals at once:

| Signal | What it means in practice |
|---|---|
| Clear entity definition | The brand, product, people, and category relationships are explicit |
| Structured information | Schema and page architecture make facts easier to retrieve |
| External validation | Third-party mentions and citations support the site’s claims |
| Concise answer blocks | Important questions are answered directly, not buried in fluff |
| Context depth | The page explains enough to support trust, not just extraction |

This is why old keyword planning breaks down. The useful question is not which phrase a page should target. The useful question is which commercial question your company deserves to answer better than anyone else.

That reframes the job. Content briefs change. Internal links change. Schema decisions change. PR changes too, because off-site confirmation affects whether a model trusts your on-site claims.

Entities beat isolated keywords

Keywords still help with retrieval. They no longer define the strategy.

Answer engines build confidence through entities and relationships. If you sell compliance software, the model needs a coherent picture of your category, product type, use cases, buyer segments, implementation reality, and third-party references. If those signals conflict, or only exist in fragments, the page is harder to cite even if it ranks well in traditional search.

For a useful outside perspective, Algomizer’s guide on how to rank in ChatGPT is worth reading because it reflects the same operational reality. Citation visibility comes from clarity, authority, and retrievability more than from old density tactics.

Practical rule: If a page cannot answer a buyer’s question in a few direct sentences before expanding into detail, it is less likely to be cited.

Austin Heaton’s methodology reflects that standard. The system is built to create an authority network around revenue-bearing topics, not a pile of disconnected articles that happen to attract visits.

Retrieval changes how content gets structured

Our analysis of B2B SaaS sites shows a common failure pattern. High-value information sits below vague introductions, brand messaging, and generic subheads, so the answer is technically present but operationally hard to extract.

Answer engines respond better when pages follow a clearer structure:

- Direct answers first to likely buying queries

- Supporting context second so the answer has enough depth to be trusted

- Evidence cues such as proof points, examples, and corroborating references

- Consistent labeling across the site so entities are easier to resolve

Site structure affects citation potential as much as copy quality. Austin’s guide on how to structure your website content so ChatGPT and Perplexity actually cite it shows the practical side of that work. If a model has to work too hard to identify the answer, it will usually cite a cleaner source.

The Four Pillars of the AEO Authority Framework

Austin Heaton’s strategy is easiest to understand as a system with four connected pillars. Many teams only invest in one of them, usually content production. That creates activity, not authority. AI visibility is more demanding because the model has to trust the answer, not just find the page.

The framework distributes effort across content, entity authority, technical infrastructure, and monitoring. Austin Heaton allocates resources through a four-layer model of 40 to 50% content, 20 to 25% entity authority, 15 to 20% technical infrastructure, and 10 to 15% monitoring, a structure described in this PPCMate profile. That same source says the model has delivered an average of 25% traffic growth within 90 days.
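To make the allocation concrete, here is a minimal sketch of how a team might turn those ranges into a budget split. The percentage ranges come from the model described above. The `allocate` helper, the layer keys, and the choice to take each range’s midpoint and rescale so the shares total 100% are assumptions made for illustration, not part of Austin’s published method.

```python
# Percentage ranges per layer, as described in the article.
LAYER_RANGES = {
    "content": (40, 50),
    "entity_authority": (20, 25),
    "technical_infrastructure": (15, 20),
    "monitoring": (10, 15),
}


def allocate(budget: float) -> dict[str, float]:
    """Split a budget across the four layers.

    Assumption: use the midpoint of each range, then rescale so the
    shares sum to 100% (the raw midpoints add up to 97.5%).
    """
    midpoints = {
        name: (low + high) / 2 for name, (low, high) in LAYER_RANGES.items()
    }
    total = sum(midpoints.values())
    return {
        name: round(budget * midpoint / total, 2)
        for name, midpoint in midpoints.items()
    }


if __name__ == "__main__":
    for layer, amount in allocate(10_000).items():
        print(f"{layer}: {amount}")
```

Any split that stays inside the stated ranges would be equally valid. The midpoint approach is just a neutral starting point before adjusting for a specific site’s gaps.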

Pillar one focuses on revenue-first content architecture

This is the foundation. The site needs pages that map directly to commercial intent, category understanding, product use cases, alternatives, implementation concerns, and trust questions.

A common error among content teams is sequencing. They publish educational content first because it feels easier to scale. Austin flips that. He starts with the pages that support pipeline. That gives the site a commercial center of gravity before broader content expands the footprint.

What this pillar does:

- Captures high-intent demand instead of waiting for blog readers to self-qualify

- Gives answer engines strong source pages for recommendation-style queries

- Improves internal linking logic because supporting content points into real revenue assets

Pillar two defines the entity clearly

A lot of websites talk about expertise without encoding it. That creates ambiguity. Answer engines need cleaner signals than “we help modern teams move faster.”

Entity work solves that problem. It defines what the company is, what products or services it offers, who the experts are, what category it belongs to, and how those elements relate to each other.

This usually means tightening schema, standardizing language across important pages, aligning brand descriptions, and reducing contradictions between owned and third-party mentions. Austin’s thinking on that architecture is reflected in his entity authority framework.

If your site says one thing, your LinkedIn says another, your author pages are thin, and third-party mentions are inconsistent, the model has to guess. You don’t want AI guessing about your authority.
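One way to picture “encoding” the entity is a schema.org `Organization` block emitted as JSON-LD. The sketch below is illustrative only: the company name, URLs, and descriptions are placeholders, and the `to_json_ld_script` helper is hypothetical. The properties used (`name`, `url`, `description`, `sameAs`, `knowsAbout`) are standard schema.org vocabulary, but which ones a given site needs is a judgment call.

```python
import json

# Placeholder entity data (assumptions for illustration, not a real company).
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Compliance Co",
    "url": "https://www.example.com",
    # Keep this description identical to the wording on owned profiles.
    "description": "Compliance software for mid-market lenders.",
    # Profiles that should describe the company the same way as the site.
    "sameAs": [
        "https://www.linkedin.com/company/example-compliance-co",
    ],
    "knowsAbout": ["compliance software", "audit automation"],
}


def to_json_ld_script(entity: dict) -> str:
    """Wrap an entity dictionary in a JSON-LD script tag for a page template."""
    payload = json.dumps(entity, indent=2)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(to_json_ld_script(organization))
```

The point of keeping the data in one structure is consistency: the same description and category language can feed page templates, author pages, and profile copy instead of drifting between them.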

Pillar three earns strategic citation and authority signals

Not all backlinks matter equally in AI search. A random link might help classic SEO at the margin. It won’t necessarily help a model decide you are a trustworthy source.

This pillar is about third-party validation in the right contexts. Mentions in relevant publications, authority articles, category discussions, and credible external references matter because they corroborate what your site claims. Co-citation is often the hidden layer here. If your brand repeatedly appears near the entities, problems, and category terms you want to be associated with, answer engines get a stronger confidence signal.

A practical implication is that PR and link building can’t operate as separate channels anymore. They need to support entity definition.

Pillar four measures AI-native performance

Most reporting dashboards still overweight traffic, average rank, and generic visibility graphs. Those metrics haven’t disappeared, but they’ve become incomplete.

The better question is whether your authority system is producing:

- AI platform referrals

- Citation frequency in answer outputs

- Commercial page engagement from high-intent sessions

- Conversions tied to AI-assisted discovery

- Topic-level ownership in answer environments

This last pillar matters because AEO work can look deceptively quiet at first. Traffic may not spike in a dramatic way. But referral quality, assisted conversions, and citation presence can improve before standard SEO tools catch up.
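Citation frequency can be tracked with very little tooling. The sketch below assumes you have already collected answer text for a set of strategic prompts, whether by hand or through whatever export your monitoring tool provides. The `citation_rate` function and the sample prompts are hypothetical, and simple substring matching is a deliberate simplification.

```python
def citation_rate(answers: dict[str, str], brand_terms: list[str]) -> float:
    """Return the share of prompts whose answer mentions any brand term.

    `answers` maps each prompt to the answer text captured for it.
    Matching is a case-insensitive substring check (an assumption; it will
    miss paraphrased mentions and may match unrelated uses of a term).
    """
    if not answers:
        return 0.0
    terms = [term.lower() for term in brand_terms]
    cited = sum(
        1
        for text in answers.values()
        if any(term in text.lower() for term in terms)
    )
    return cited / len(answers)


if __name__ == "__main__":
    sample = {
        "best AP automation software": "Options include Example Co and others.",
        "finance workflow automation vendors": "Popular tools include Foo and Bar.",
    }
    print(citation_rate(sample, ["Example Co"]))
```

Running the same prompt set on a fixed schedule makes the number comparable over time, which matters more than the absolute value on any single day.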

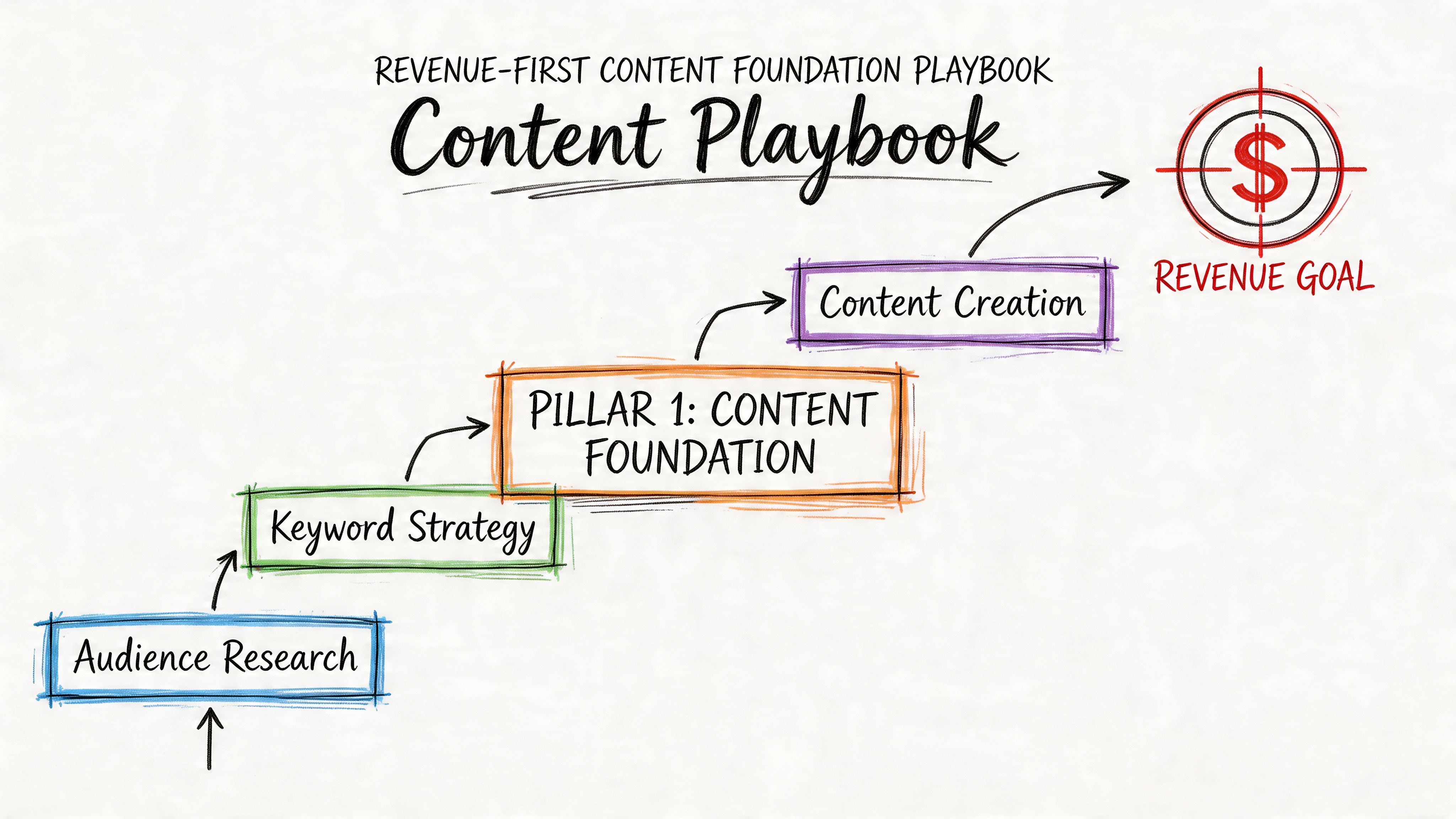

Playbook: Building Your Revenue-First Content Foundation

Traffic-first content plans feel productive. They also leave revenue on the table.

The part of Austin Heaton’s strategy that matters most is the sequencing. He starts with pages that can win business, shape entity understanding, and give answer engines something commercially useful to cite. Blog content comes later, once the site has a real buying-path architecture.

That discipline is rare. Many B2B content programs publish thought leadership, glossaries, and trend pieces while their solution pages still read like vague brand copy. In an AI search environment, that is a structural mistake. If ChatGPT, Perplexity, or Google’s AI features evaluate your site and find weak commercial pages, they have very little reason to surface you for high-intent queries.

Austin’s five-layer content hierarchy fixes that problem by putting revenue infrastructure first. The logic is simple. Build the pages tied to pipeline before you scale publishing around awareness and education.

A B2B SaaS example

Take a SaaS company selling workflow automation for finance teams. A standard SEO playbook often starts with top-of-funnel topics such as “how to improve finance operations” or “month-end close best practices.” Those topics can attract visits, but they do little for revenue if the site still lacks credible pages for:

- finance workflow automation software

- workflow automation for accounts payable

- alternatives and comparison queries

- implementation and onboarding questions

- pricing and packaging concerns

- compliance and security questions tied to purchase risk

Austin’s system builds those pages first. The goal is not just to rank a URL. The goal is to give answer engines and buyers a clear, defensible source for evaluation-stage questions.

A page in that stack usually includes:

- A direct category definition near the top

- Short answer blocks for common buying questions

- Use-case sections aligned to buyer jobs

- Social proof elements placed where skepticism appears

- Clear CTA paths matched to page intent

- Internal links to supporting proof and adjacent commercial pages

The five layers work because they stack intent

The hierarchy works because each layer increases commercial clarity instead of scattering effort across disconnected assets.

Layer one covers core revenue pages

Solution, service, and category pages target purchase intent. These are often the best candidates for citation when someone asks an answer engine for a vendor, provider, or category fit.

Layer two expands commercial depth

Pricing, implementation, alternatives, comparisons, and feature pages reduce buying friction. They answer the questions that stall deals.

Layer three adds supporting authority content

Educational content starts pulling its weight here because it reinforces the commercial core and helps connect the brand to the right problems and use cases.

Layer four strengthens discoverability and retrieval

Schema, internal linking, and topic clustering help models interpret the site correctly and connect related claims across pages.

Layer five supports the system with external signals

Once the foundation is in place, outside validation can amplify it instead of compensating for weak page architecture.

Austin explains the sequencing in more detail in his article on content hierarchy for B2B companies that starts with bottom-funnel pages instead of blog posts.

A blog post can earn attention. A strong solution page can convert demand and become a citation source.

What these pages need to look like

A revenue-first page has to do two jobs at once. It must make the offer easy for AI systems to interpret, and it must make the buying decision easier for a human evaluator.

That changes how the page is written. Long scene-setting intros hurt performance. So does clever messaging that never states what the product is, who it serves, and why it is different. Buyers want direct answers. Answer engines do too.

Use this checklist when rebuilding core pages:

| Page element | What works | What doesn’t |
|---|---|---|
| Opening section | States what the product or service is and who it’s for | Generic brand messaging |
| Question handling | Answers buying questions directly | Forces users to hunt through paragraphs |
| Proof placement | Adds proof near claims and objections | Keeps all trust signals on one testimonials page |
| Internal linking | Connects to alternatives, pricing, and implementation pages | Sends every click to the blog |
| CTA logic | Matches stage of intent | Uses one generic demo CTA everywhere |


There is a trade-off. Editorial teams usually prefer broad-topic publishing because it creates visible output fast. Revenue-first architecture is less glamorous. It asks the team to spend early cycles on category pages, comparison pages, implementation pages, and sales-enablement content.

That trade-off is worth making. Once authority builds, a site with commercial depth can convert AI-assisted discovery into pipeline. A site built on awareness content alone usually cannot.

Playbook: Engineering Authority and External Validation

A revenue-first content system breaks if the market cannot verify your claims. Answer engines compare your site against the broader reference graph. If your category, expertise, and product narrative show up inconsistently across the web, you become harder to cite and easier to ignore.

That is why Austin pairs content strategy with entity engineering and citation engineering. One defines exactly what the company is. The other gives outside systems enough corroboration to trust it.

A FinTech example

Consider a FinTech company selling risk analytics software to lenders. Its homepage says it improves underwriting. Product pages mention compliance, decisioning, and portfolio monitoring. The founder describes the company one way on podcasts, while trade coverage labels it as fraud prevention or lending intelligence.

That kind of inconsistency weakens answer confidence.

The fix starts with schema and terminology alignment. The company needs one clear category definition, one consistent description of the product, and repeated language around the problems it solves. Author pages, product pages, about pages, and supporting articles should reinforce the same entity map. If the language shifts from page to page, AI systems have to guess what the business does.

Then the operating model has to support that clarity. Austin’s process combines technical SEO, AEO, and digital PR with recurring audits for schema coverage, publication discipline, and off-site authority building. The point is not activity volume. The point is making the brand easier to retrieve, verify, and cite.

What authority engineering looks like in practice

In execution, this usually breaks into three workstreams:

Entity definition on owned properties

Standardize page templates, schema, author attribution, and category language so every core asset describes the business the same way.

Third-party corroboration

Earn mentions, links, and expert commentary on sites your buyers and the AI systems they use already trust.

Association building

Place the brand in discussions tied to the category terms, buying questions, and comparison language you want answer engines to connect with your company.

Weak link building shows up fast. A placement on an irrelevant site may help a vanity dashboard. It rarely improves recommendation eligibility. Citation quality depends on contextual fit, topical proximity, and whether the source reinforces the same commercial narrative as your owned content.

That is also why digital PR now plays a larger role in AEO than in traditional SEO. Austin’s article on digital PR for AI search citations explains how placements can strengthen retrieval and citation likelihood, not just referral traffic. For a supporting perspective on external trust signals, Mastering AI Content and Google EEAT is also worth reviewing.

Why high-authority placements work differently in AEO

High-credibility publications matter because they act as validation nodes. They help answer engines confirm that your brand belongs in a specific professional conversation. That is different from chasing links for raw authority scores.

For the FinTech company, the right placements would sit in lender operations, compliance, fraud risk, and analytics publications. The content should clarify the company’s expertise and category position. It does not need to read like promotion. It needs to make the brand legible to both buyers and machine retrieval systems.

The web is the review layer for your claims. If credible third-party sources do not reinforce your expertise, your site has to do too much persuasion on its own.

The trade-offs most teams miss

Entity work is hard to celebrate internally because it produces less visible output than publishing new pages. Yet tightening schema, cleaning up author attribution, and standardizing category language often improves the performance of every commercial page you publish afterward.

External validation also requires restraint. The right mention on a trusted, relevant site can do more for answer-engine confidence than a batch of generic placements. Volume is easier to buy. Narrative alignment is harder, and more valuable.

For teams that need senior-led execution across content strategy, entity authority, and digital PR, Austin Heaton offers that combination as a consulting service.

Measuring What Matters in the AI Search Era

The old reporting stack hides too much. Organic traffic can go up while commercial visibility stays weak. Rankings can look stable while answer-engine citations disappear. Blog sessions can inflate dashboards while pipeline quality declines.

That’s why AI-era measurement needs a different scorecard. You still watch organic search performance, but you stop treating it as the primary proof of success.

What to track instead

A useful AEO scorecard includes four categories.

AI referral traffic

Track visits coming from platforms such as ChatGPT, Perplexity, Gemini, and AI-assisted search surfaces where available in analytics.

Citation presence

Monitor whether your brand, executives, product pages, or supporting articles appear in answer outputs for strategic prompts.

Commercial engagement

Watch what AI-referred users do on solution, pricing, alternatives, and demo-intent pages.

Conversion and pipeline influence

Look for form fills, booked meetings, qualified leads, and sales conversations tied to AI-assisted sessions or self-reported discovery paths.
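As a starting point for the AI referral category, sessions can be tagged by referrer hostname. This is a rough sketch: the hostnames listed are assumptions based on the platforms named above and should be checked against what actually shows up in your analytics. The `classify_referrer` helper is hypothetical, and many AI-assisted visits arrive with no referrer at all, which is why self-reported discovery paths still matter.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify and extend from your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if unknown."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


if __name__ == "__main__":
    for url in ["https://www.perplexity.ai/search?q=x", "https://www.google.com/"]:
        print(url, "->", classify_referrer(url))
```

Once sessions carry a platform label, the commercial engagement and conversion categories become simple filters on the same data.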

This is where many teams get stuck. They’re trying to prove AEO using legacy SEO KPIs alone. That misses the point. AEO is not just a visibility program. It’s a recommendation eligibility program.

Why vanity metrics get dangerous

Traffic is an easy number to present because everyone understands it. It’s also dangerous because it can hide strategy drift.

If a team grows informational traffic while losing visibility on pages that should influence vendor selection, the report can look healthy and the pipeline can weaken at the same time. That disconnect is getting more common as answer engines satisfy informational intent without sending as many clicks.

A stronger discipline is to review content by decision-stage role:

- Which pages are being surfaced for category questions?

- Which pages support alternatives and vendor comparison intent?

- Which citations are landing on content that can move a deal forward?

- Which AI referrals produce meaningful actions?

The best-performing AEO program often looks less impressive in a vanity dashboard and more impressive in a revenue review.

If you’re thinking about quality control on the content side, Humantext’s piece on Mastering AI Content and Google EEAT is a useful complement. The reason it matters here is simple. If content quality and trust signals are weak, measurement gets noisy because low-trust pages may attract attention without earning recommendation-level credibility.

Build the reporting loop around decisions

A practical reporting rhythm looks like this:

| Reporting layer | Question it answers |
|---|---|
| Weekly | Are key pages technically healthy and still citation-ready? |
| Monthly | Which prompts, pages, and entities are gaining or losing presence? |
| Quarterly | Is AI visibility influencing qualified pipeline and closed-won conversations? |

That last layer matters most. The goal isn’t to prove that AI sent “more traffic.” The goal is to prove that your authority system is influencing the moments when buyers shortlist vendors.

Building Your Durable Authority System

Publishing more content will not create a durable position in AI search. A system that answer engines can verify, trust, and cite will.

That is the difference in how Austin Heaton builds content strategy. The work starts with commercial intent, then hardens into an authority system that supports discovery, evaluation, and vendor selection. The objective is not broader visibility for its own sake. The objective is to become the source AI systems reach for when a buyer asks a high-stakes question.

For a CMO, that changes the operating question. Do not ask whether the team needs a larger content calendar. Ask whether the business has built enough entity clarity, proof, and external corroboration to earn recommendation-level trust.

That standard is harder to hit.

It also creates a better moat. Keyword rankings can move quickly. Authority is slower to build, but once the system is in place, it is much harder for a competitor to copy because they need more than content production. They need validated expertise, consistent market signals, and pages that support revenue conversations instead of chasing top-of-funnel volume.

Keywords still matter. Rankings still matter. They now sit inside a broader answer-engine model, where retrieval, source vetting, and citation confidence shape who gets mentioned. The teams that win in that environment do not treat content as a publishing function. They treat it as infrastructure for trust, sales velocity, and category authority.

If you need senior-level help building that system, Austin Heaton works with B2B SaaS, FinTech, AI, and growth-stage teams on AEO, SEO, entity strategy, digital PR, and revenue-first content planning built for Google and AI search platforms.