How Austin Heaton Tracks AEO Progress with AtomicAGI

Learn how Austin Heaton tracks AEO progress with AtomicAGI. A step-by-step guide to setting goals, configuring the tool, and measuring AI search ROI.

Many teams still measure AEO (answer engine optimization) like SEO. They watch traffic, rankings, and maybe branded mentions, then wonder why stakeholders don’t see a revenue story. That breaks down fast in AI search, because the question isn’t whether a page ranked. It’s whether your brand got cited, recommended, clicked, and converted inside the moments that shape buyer decisions.

That’s why the most useful part of How Austin Heaton Tracks AEO Progress with AtomicAGI isn’t the prompt list or the dashboard layout on its own. It’s the measurement chain. Prompt visibility connects to AI engine citations. Citations connect to visits from specific engines. Those visits connect to conversions, funnel progression, and revenue attribution. Once that chain is in place, AEO stops looking experimental and starts looking like a measurable growth channel.

Beyond Pageviews: Defining AEO Success Metrics

Pageviews are a weak success metric for AEO. CMOs do not fund citation reports. They fund pipeline.

Austin Heaton measures AEO the way an operator measures any revenue channel. Start with whether AI engines surface the brand in the prompts that matter. Then measure whether those mentions appear in recommendation-heavy answers, whether they drive qualified visits, and whether those visits turn into meetings, opportunities, and revenue. That sequence is what makes AEO defensible in a budget review.

What counts in AEO

The scoreboard changes in AI search. Rankings and sessions still matter, but they sit lower in the chain than they do in SEO. The earlier signals are usually stronger predictors of future return.

The metrics that carry weight are:

- Citation frequency. How often the brand appears in AI-generated answers for tracked prompts.

- Visibility share. How much of the prompt set your brand owns compared with direct competitors.

- Answer placement. A top citation or explicit recommendation usually drives more consideration than a passing mention lower in the response.

- Mention quality. “Recommended for” has more commercial value than “also known for.”

- Engine-level performance. ChatGPT, Perplexity, Gemini, Claude, and Copilot produce different traffic patterns and different conversion behavior.

- Pipeline contribution. Form fills, demo requests, influenced opportunities, and closed revenue tied back to AI-assisted discovery.

That framework matters because AEO rarely produces value in one clean step. A prospect may first see your brand in ChatGPT, revisit through branded search, then convert after a direct visit. Teams that only report last-click AI traffic miss part of the return. Teams that only report citations cannot prove commercial impact.
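As a rough illustration of the first two metrics, the sketch below computes citation frequency and visibility share from a handful of prompt observations. The record layout, brand names, and function names are hypothetical, and visibility share is defined here as share of all brand citations, which is only one plausible reading. None of this is AtomicAGI’s data model or API.

```python
from collections import Counter

# Hypothetical observations: one row per (prompt, engine) answer,
# listing every brand cited in that answer.
observations = [
    {"prompt": "best crm for startups", "engine": "chatgpt", "brands": ["Acme", "Rival"]},
    {"prompt": "best crm for startups", "engine": "perplexity", "brands": ["Rival"]},
    {"prompt": "acme vs rival pricing", "engine": "chatgpt", "brands": ["Acme"]},
    {"prompt": "crm implementation checklist", "engine": "gemini", "brands": []},
]

def citation_frequency(rows, brand):
    """Fraction of tracked answers that cite the brand at all."""
    if not rows:
        return 0.0
    return sum(brand in r["brands"] for r in rows) / len(rows)

def visibility_share(rows, brand):
    """Brand's share of all brand citations across the tracked answers."""
    counts = Counter(b for r in rows for b in r["brands"])
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0

print(citation_frequency(observations, "Acme"))  # 0.5
print(visibility_share(observations, "Acme"))    # 0.5
```

Keeping the two functions separate makes the distinction visible: a brand can be cited often and still hold a small share if competitors are cited even more.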

Why CMOs need attribution, not just visibility

Visibility is useful. Attribution gets budget approved.

I have seen plenty of teams bring screenshots of AI mentions into a leadership meeting and call it momentum. That works once. The next question is always the same: which prompts influenced pipeline, which engines drove the highest-converting traffic, and what should get more budget next month?

That is why AEO has to live inside the same reporting logic used for paid search, organic search, and outbound influence. If your team already relies on multi-touch attribution models, AEO should feed that same system instead of sitting in a separate visibility report. Austin applies that logic in his guide to measuring AEO results for B2B companies, where the focus is tying prompt-level visibility to conversion paths and revenue, not stopping at generic reach.

Intent matters just as much as volume. A brand can show up constantly for broad educational prompts and still generate little pipeline if it disappears from comparison, vendor selection, and implementation queries. Good AEO measurement separates awareness visibility from buyer-stage visibility so teams do not confuse exposure with commercial traction.

What distorts AEO reporting

A few reporting mistakes show up again and again:

- Combining AI traffic with organic traffic and losing source-level attribution.

- Counting all mentions the same way even though a direct recommendation is stronger than a neutral reference.

- Prioritizing visit volume over visit quality when AI traffic often converts on a different curve than traditional search traffic.

- Treating every tracked prompt as equal instead of weighting prompts by buying intent.

- Reporting activity without CRM outcomes so leadership sees motion, not business impact.

The practical standard is simple. Measure whether your brand appears in the right prompts, inside the right engines, in a recommendation context that can influence a buying decision. Then connect those exposures to visits, conversions, pipeline, and revenue. That is the bar Austin Heaton uses with AtomicAGI, and it is the difference between an interesting AEO program and one a CMO can scale.
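One way to avoid counting every mention and every prompt the same is a weighted score. The sketch below is an assumption-heavy illustration: the weight values, the three mention labels, and the function name are invented for the example and should be tuned against your own funnel data.

```python
# Illustrative weights only; these numbers are assumptions, not benchmarks.
INTENT_WEIGHT = {"awareness": 1.0, "consideration": 2.0, "decision": 3.0}
MENTION_WEIGHT = {"absent": 0.0, "neutral": 0.5, "recommended": 1.0}

def weighted_visibility(rows):
    """Score in [0, 1]: mention quality weighted by each prompt's buying intent."""
    possible = sum(INTENT_WEIGHT[r["intent"]] for r in rows)
    if possible == 0:
        return 0.0
    earned = sum(INTENT_WEIGHT[r["intent"]] * MENTION_WEIGHT[r["mention"]] for r in rows)
    return earned / possible

rows = [
    {"intent": "awareness", "mention": "recommended"},
    {"intent": "decision", "mention": "absent"},
]
print(weighted_visibility(rows))  # 0.25
```

An unweighted count would report 50% presence for this example. The weighted score drops to 0.25 because the missing mention sits on the decision-stage prompt.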

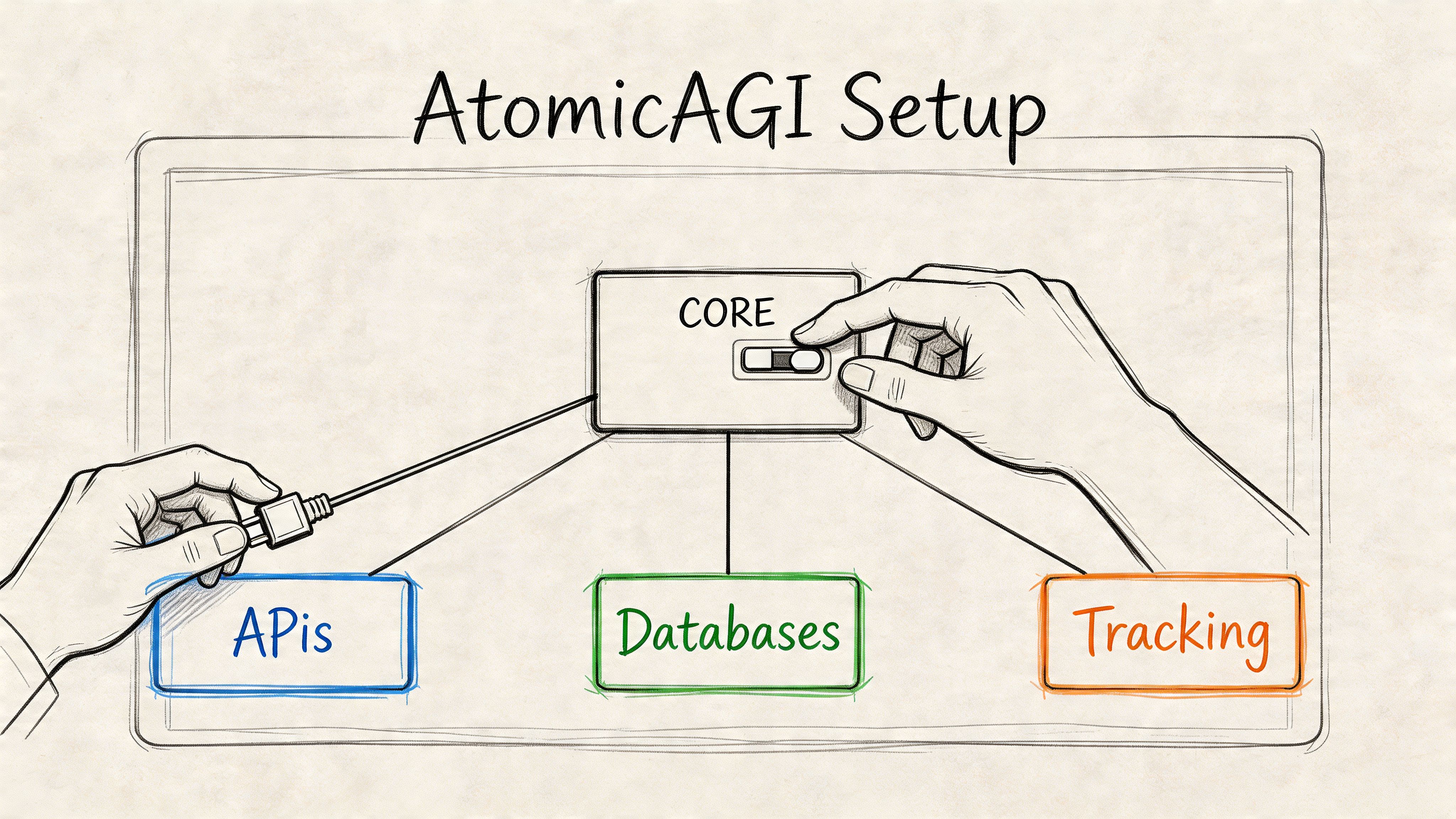

Configuring AtomicAGI for Performance Tracking

AtomicAGI only proves ROI if the setup is built for attribution from day one. Austin Heaton does not configure it as a visibility toy. He configures it as a measurement layer that ties prompt presence, AI referrals, conversion events, and CRM outcomes into one reporting system leadership can trust.

The first decision is channel architecture. AI traffic needs its own source definitions inside analytics, not a catch-all referral bucket. ChatGPT, Perplexity, Gemini, Claude, and Copilot should each have distinct source views so the team can compare traffic quality, conversion rate, and pipeline contribution by engine. If those visits are blended into organic or referral, the reporting loses the one thing a CMO needs: proof that AI search is producing commercial value.

Start with source isolation

A clean setup usually starts inside one business unit, one product line, or one market. Mixed workspaces create reporting noise fast. Enterprise, mid-market, self-serve, and regional offers often rank for different prompt sets and convert on different sales cycles, so Austin keeps those environments separate whenever possible.

From there, the configuration follows a strict order:

- Create a project around a defined revenue motion. Use one product category, buyer type, or region per workspace.

- Map AI referrers into their own channel group. That preserves source-level attribution for each engine.

- Connect conversion events to CRM stages. Demo requests, qualified leads, opportunities, and closed revenue should be visible in the same reporting path.

- Add a competitor set that matches real buying decisions. Track the brands that appear in the same recommendation and comparison prompts.

- Load the prompt library you want to measure every week. Treat this like a forecasting model, not a loose keyword list.

That order matters. If prompt tracking is live before attribution and event mapping are clean, teams get screenshots of visibility but no defensible revenue story.
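The referrer-mapping step can be sketched as a small lookup. The hostnames below are assumptions based on the engines’ public web apps; verify them against your own analytics data, since engines change domains and some AI visits arrive with no referrer at all.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; confirm against real traffic before relying on them.
AI_REFERRERS = {
    "chatgpt.com": "chatgpt",
    "chat.openai.com": "chatgpt",
    "perplexity.ai": "perplexity",
    "gemini.google.com": "gemini",
    "claude.ai": "claude",
    "copilot.microsoft.com": "copilot",
}

def classify_referrer(referrer_url):
    """Return the AI engine for a full referrer URL, or None if it is not a known AI source."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)

print(classify_referrer("https://www.perplexity.ai/search?q=crm"))  # perplexity
print(classify_referrer("https://www.google.com/"))                 # None
```

Returning `None` for everything else keeps ordinary organic and referral traffic out of the AI channel group instead of guessing.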

Analytics hygiene also matters more here than many teams expect. Bad event naming, duplicate form fires, and inconsistent UTMs will distort AEO reporting long before AtomicAGI becomes the problem. Teams cleaning up that layer can use Trackingplan’s guide on how to install Trackingplan as a practical reference.
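Duplicate form fires are one hygiene problem that is easy to illustrate. The sketch below drops repeat fires of the same event from the same session inside a short window. The event fields and the five-second window are assumptions for the example, not a Trackingplan or AtomicAGI feature.

```python
def dedupe_events(events, window_seconds=5):
    """Drop repeat fires of the same event by the same session within the window."""
    kept = []
    last_kept = {}  # (session_id, name) -> timestamp of the last kept event
    for event in sorted(events, key=lambda e: e["ts"]):
        key = (event["session_id"], event["name"])
        previous = last_kept.get(key)
        if previous is not None and event["ts"] - previous < window_seconds:
            continue  # treat as a duplicate fire
        last_kept[key] = event["ts"]
        kept.append(event)
    return kept

events = [
    {"session_id": "s1", "name": "demo_request", "ts": 100},
    {"session_id": "s1", "name": "demo_request", "ts": 101},  # double fire
    {"session_id": "s1", "name": "demo_request", "ts": 400},  # genuine second submit
    {"session_id": "s2", "name": "demo_request", "ts": 101},
]
print(len(dedupe_events(events)))  # 3
```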

Define prompts by buyer intent

Prompt selection is where weak AEO programs usually break. B2B marketing teams often load broad informational queries because they are easy to brainstorm and easy to report on. That creates inflated visibility numbers with little connection to pipeline.

Austin structures prompt sets around buying stage and commercial intent:

- Awareness prompts for category education and problem definition

- Consideration prompts for alternatives, comparisons, workflows, and use cases

- Decision prompts for vendor evaluation, implementation questions, pricing discussions, and shortlist validation

The value of that structure is simple. It shows whether the brand is visible where buyers learn, where they compare, and where they choose. A company can dominate awareness prompts and still lose the market if it disappears from decision-stage answers.

Each prompt also needs metadata. Austin typically tags prompts by intent stage, topic cluster, product line, geography, and whether the query is branded, non-branded, or competitor-adjacent. That makes segmentation possible inside the dashboard later. It also keeps the team from treating every prompt as equally valuable.
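A minimal sketch of that tagging scheme, assuming a simple in-house prompt library rather than any AtomicAGI import format. The class and field names are hypothetical; the tag dimensions are the ones listed above.

```python
from dataclasses import dataclass
from enum import Enum

class Intent(Enum):
    AWARENESS = "awareness"
    CONSIDERATION = "consideration"
    DECISION = "decision"

class BrandType(Enum):
    BRANDED = "branded"
    NON_BRANDED = "non_branded"
    COMPETITOR_ADJACENT = "competitor_adjacent"

@dataclass(frozen=True)
class TrackedPrompt:
    text: str
    intent: Intent
    topic_cluster: str
    product_line: str
    geography: str
    brand_type: BrandType

library = [
    TrackedPrompt("what is revenue attribution", Intent.AWARENESS,
                  "attribution", "analytics", "US", BrandType.NON_BRANDED),
    TrackedPrompt("acme vs rival for mid-market teams", Intent.DECISION,
                  "comparisons", "analytics", "US", BrandType.COMPETITOR_ADJACENT),
]

# Segment the library the same way the dashboard will be filtered later.
decision_prompts = [p for p in library if p.intent is Intent.DECISION]
print(len(decision_prompts))  # 1
```

Using enums for intent and brand type prevents near-duplicate tags such as "Decision" and "decision" from splitting a segment.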

A prompt belongs in the core reporting set only if someone on the revenue team would care whether the brand appears in that conversation.

For regulated and high-complexity categories, the setup also has to fit the rest of the measurement stack. Schema, analytics, attribution, and compliance constraints all affect what can be tracked and how confidently it can be reported. Austin explains that stack design in his guide to the FinTech SEO tech stack for regulated companies.


Configure for downstream proof

The last part of setup is reporting logic. Austin builds AtomicAGI around the questions stakeholders ask once budget scrutiny starts.

- Which AI engines send qualified traffic, not just visits?

- Which prompt groups influence decision-stage sessions?

- Which AI-assisted sessions become leads, opportunities, and revenue?

- Which content or entity changes improved commercial visibility over time?

If those fields, events, and joins are not defined up front, the team ends up rebuilding the story in spreadsheets at quarter end. That is slow, hard to defend, and usually too late to influence budget decisions.
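The join itself can be small once the keys exist. The sketch below assumes each CRM lead carries the session ID that created it, which is a hypothetical schema; real CRM exports usually need a more careful identity match.

```python
from collections import defaultdict

sessions = [
    {"session_id": "s1", "engine": "chatgpt"},
    {"session_id": "s2", "engine": "chatgpt"},
    {"session_id": "s3", "engine": "perplexity"},
]
# Hypothetical CRM export keyed by the session that created the lead.
crm_leads = [
    {"session_id": "s1", "stage": "opportunity", "revenue": 0},
    {"session_id": "s3", "stage": "closed_won", "revenue": 12000},
]

def funnel_by_engine(sessions, crm_leads):
    """Roll AI-referred sessions up to leads and revenue per engine."""
    leads = {lead["session_id"]: lead for lead in crm_leads}
    report = defaultdict(lambda: {"sessions": 0, "leads": 0, "revenue": 0})
    for session in sessions:
        row = report[session["engine"]]
        row["sessions"] += 1
        lead = leads.get(session["session_id"])
        if lead is not None:
            row["leads"] += 1
            row["revenue"] += lead["revenue"]
    return dict(report)

print(funnel_by_engine(sessions, crm_leads)["perplexity"])
# {'sessions': 1, 'leads': 1, 'revenue': 12000}
```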

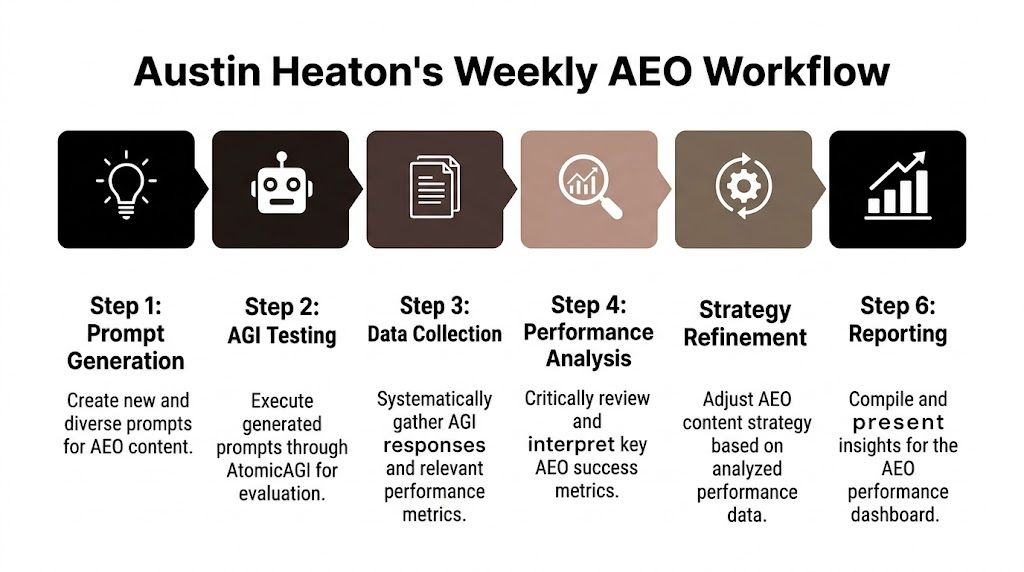

The Weekly AEO Tracking Workflow

Weekly AEO tracking is where budget conversations are won or lost. Austin Heaton uses AtomicAGI to tie prompt-level visibility to sessions, conversions, and pipeline evidence before anyone asks whether the program is working.

The cadence is simple. The discipline is not.

Each week, Austin runs a fixed set of buyer prompts across the major AI engines inside AtomicAGI, then compares the output against prior weeks, competitor mentions, and downstream conversion behavior. The goal is not to admire visibility charts. The goal is to answer a harder question. Did this week’s AEO work increase the brand’s share of commercial AI answers in a way that can lead to revenue?

The weekly operating cadence

The prompt set stays stable enough to show trend lines and flexible enough to reflect new product angles, competitor moves, and changes in how buyers phrase evaluation questions. That trade-off matters. If the prompts change every week, the team loses comparability. If they never change, the reporting misses shifts in the market.

A weekly review tracks five things:

- Presence across priority prompts and engines

- Citation position within the answer, including whether the brand appears first, later, or only as a passing mention

- Framing of the mention, such as recommended vendor, comparison option, category example, or generic reference

- Competitive share inside the same prompt cluster

- Week-over-week movement after publishing, schema updates, internal linking changes, or entity refinement

That last point is where the process gets useful. AtomicAGI is not just capturing whether the brand appeared. It is logging enough detail to test cause and effect. If a page template changed on Tuesday and recommendation-style mentions improve by Friday, the team has a working hypothesis worth validating.
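Week-over-week movement reduces to a per-cluster difference. A minimal sketch, assuming presence is stored as a rate between 0 and 1 per prompt cluster; the cluster names and numbers are made up for illustration.

```python
def week_over_week(previous, current):
    """Change in presence rate per prompt cluster, in percentage points."""
    clusters = sorted(set(previous) | set(current))
    return {
        c: round((current.get(c, 0.0) - previous.get(c, 0.0)) * 100, 1)
        for c in clusters
    }

last_week = {"pricing": 0.40, "comparisons": 0.25}
this_week = {"pricing": 0.55, "comparisons": 0.20, "implementation": 0.10}
print(week_over_week(last_week, this_week))
# {'comparisons': -5.0, 'implementation': 10.0, 'pricing': 15.0}
```

A movement like this is a hypothesis, not proof. It only becomes evidence when it lines up with a dated change and repeats in later weeks.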

What gets documented every week

Austin’s team logs every prompt the same way so the review holds up under scrutiny from marketing leadership and revenue operations. Consistency matters more than volume.

For each prompt, the worksheet answers four questions:

- Did the brand appear at all? If not, the issue is usually relevance, entity strength, or content fit.

- How did the brand appear relative to competitors? A low-priority mention below direct rivals rarely influences pipeline.

- What role did the brand play in the answer? Recommended brands and cited solutions carry more commercial weight than brands buried in a long list.

- Did that visibility line up with real site behavior later? If an AI mention produces no qualified visits or conversion activity, the team treats it as weak signal, not proof of success.

One missed citation does not trigger a strategy rewrite. Repeated losses in the same prompt cluster do.
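That rule can be expressed as a simple check over the weekly log. The three-week threshold and the data shape below are assumptions chosen for the example.

```python
def clusters_needing_review(history, min_consecutive_misses=3):
    """Return clusters whose most recent weeks are all misses."""
    flagged = []
    for cluster, weekly_presence in history.items():
        recent = weekly_presence[-min_consecutive_misses:]
        if len(recent) == min_consecutive_misses and not any(recent):
            flagged.append(cluster)
    return flagged

# One boolean per week, oldest first: did the brand appear in this cluster?
history = {
    "pricing": [True, False, True, True],        # one miss: ignore
    "comparisons": [True, False, False, False],  # repeated losses: review
}
print(clusters_needing_review(history))  # ['comparisons']
```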

Field note: The most expensive AEO mistake is reporting AI visibility without separating informational mentions from buying-stage recommendations.

AtomicAGI helps here because it keeps prompt observations tied to traffic and conversion data in one system. That makes weekly reviews faster and easier to defend.

How the team turns signal into action

The weekly meeting is not a status call. It is a diagnosis session.

When a prompt cluster slips, Austin looks for the specific reason before recommending any fix. Sometimes the issue is content structure. Sometimes the page answers the topic but misses the actual buying question. In other cases, the page is strong, but the brand’s entity signals are weak enough that the model cites a better-known competitor.

The review usually checks:

- Answer fit, whether the page directly resolves the buyer’s question

- Entity clarity, including brand, product, and category relationships

- Schema coverage, where structured data can improve interpretability

- Comparative positioning, whether the copy gives the model language it can reuse in summaries

- Authority support, including corroborating mentions outside the site

That is why Austin uses a defined audit process instead of ad hoc edits. His step-by-step AEO audit process for B2B SaaS companies gives the team a repeatable way to find root causes instead of guessing.

Why weekly beats monthly

Monthly reporting is too slow for a channel that changes this fast. AI engines adjust outputs, competitors publish new pages, and prompt phrasing shifts with product launches and category news.

Weekly tracking gives enough data to spot real movement without confusing noise for trend. It also gives CMOs something more valuable than a visibility update. It gives them an operating system for deciding where to invest next, which experiments to stop, and which wins are strong enough to take into the boardroom.

Building Your AEO Performance Dashboard

Raw AEO data doesn’t persuade executives. A well-built dashboard does. The difference is narrative. AtomicAGI should show how AI visibility progresses from exposure to traffic to conversion quality, with enough detail for the operator and enough clarity for the C-suite.

The most effective dashboard starts with channel-level proof. AtomicAGI can tie bot activity spikes, such as a 2 to 3X increase in GPTBot visits, to citation lifts and has documented 560% growth in AI clicks in 60 days. It also supports engine-level attribution showing that AI-driven traffic can convert at over 15%, compared with a 5% to 8% organic average, according to AtomicAGI’s platform documentation.
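Percent-growth figures are easy to misread in a leadership meeting, so a short arithmetic check helps. The sketch below only restates the numbers quoted above; the 1,000-visit baseline is a made-up example.

```python
def growth_multiple(percent_growth):
    """Convert a percent increase into a multiple of the starting value."""
    return 1 + percent_growth / 100

def expected_conversions(visits, conversion_rate_percent):
    """Conversions implied by a visit count and a conversion rate in percent."""
    return visits * conversion_rate_percent / 100

print(round(growth_multiple(560), 2))  # 6.6
print(expected_conversions(1000, 15))  # 150.0
print(expected_conversions(1000, 5))   # 50.0
```

In other words, 560% growth means ending at 6.6 times the starting click count, not 5.6 times. At the quoted rates, 1,000 AI-referred visits would yield about 150 conversions, against 50 to 80 for the same volume of organic traffic.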

The executive layer

At the top of the dashboard, keep it simple. The first row should answer three questions fast:

- Are we getting more visible in AI answers?

- Are AI engines sending qualified traffic?

- Is that traffic converting?

That top row usually includes visibility share, AI-referred sessions, and conversions by engine. If leadership has to scroll to find the business outcome, the dashboard is misbuilt.

Below that, show prompt-stage segmentation. Awareness is useful, but decision-stage prompts deserve their own block because they’re closest to pipeline. This is also where a CMO can see whether gains are broad but shallow, or concentrated where buying intent is stronger.

Essential AEO Dashboard Metrics in AtomicAGI

| Metric | What It Measures | Target Benchmark |
|---|---|---|
| Visibility share | Share of tracked prompt landscape where the brand appears | More than 30% visibility share in decision-stage prompts |
| Average position | Whether the brand appears as a primary or lower citation | Top-3 average position |
| Decision prompt coverage | Presence in high-intent buyer prompts | Top-2 position in 80% of decision prompts |
| AI traffic conversion rate | How well AI-referred visitors convert compared with other channels | Over 15% conversion rate from AI traffic |
| Engine-level referrals | Which platforms actually send users to the site | Tracked by engine, then prioritized by quality |
| Bot activity trend | Whether crawl and retrieval activity align with visibility gains | 2 to 3X increase in AI bot visits in 60 days |
| Geographic visibility | Whether visibility holds across target markets | 20%+ visibility share across North American and European markets |
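Three of those benchmarks are simple thresholds, so they can be checked automatically each week. A minimal sketch: the thresholds come from the table, while the metric keys and function name are hypothetical.

```python
def benchmark_report(metrics):
    """Return pass/fail for the threshold-style benchmarks in the table."""
    return {
        # More than 30% visibility share in decision-stage prompts
        "decision_visibility_share": metrics["decision_visibility_share"] > 0.30,
        # Top-3 average position (lower is better)
        "average_position": metrics["average_position"] <= 3,
        # Over 15% conversion rate from AI traffic
        "ai_conversion_rate": metrics["ai_conversion_rate"] > 0.15,
    }

this_week = {
    "decision_visibility_share": 0.34,
    "average_position": 3.4,
    "ai_conversion_rate": 0.16,
}
print(benchmark_report(this_week))
# {'decision_visibility_share': True, 'average_position': False, 'ai_conversion_rate': True}
```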

The operator layer

The second half of the dashboard should be more diagnostic. Here, practitioners need filters for engine, prompt cluster, intent stage, and competitor set.

A good build usually includes:

- Trend lines by engine so ChatGPT, Perplexity, Gemini, Claude, and Copilot don’t get blended together

- Prompt-level views to isolate where visibility improved or dropped

- Competitor comparison widgets to show share shifts rather than standalone numbers

- Funnel views that connect AI traffic to conversions and later-stage outcomes

The dashboard should tell two stories at once. Executives need direction and ROI. Operators need causes and next actions.

This is also the place to compare engine quality, not just engine volume. Some engines send more visits. Others send fewer but more qualified ones. That’s why a report on which LLM brings in the best leads is useful as a companion view. It reframes the conversation from “Which engine is biggest?” to “Which engine is most valuable for this business?”

What weak dashboards get wrong

Weak AEO dashboards usually fail in one of three ways:

- They over-index on screenshots of mentions.

- They blend all AI traffic together and hide engine quality.

- They stop at session counts and never connect to conversion behavior.

A strong AtomicAGI dashboard does the opposite. It gives leadership one clear line from AI presence to business impact.

Interpreting Results and Iterating Your Strategy

The hard part isn’t collecting AEO data. It’s deciding what the pattern means. The same visibility drop can come from a model update, a competitor’s content refresh, a schema problem, or a simple mismatch between the prompt and the page being cited.

That’s why interpretation needs a diagnostic sequence rather than a generic optimization checklist.

Read the pattern before changing the page

Start with scope. If visibility drops across multiple engines at once, look first at structural issues such as content changes, schema errors, crawlability, or weakened entity signals. If the drop appears in one engine but not the others, the cause is often engine-specific behavior or a shift in how that model summarizes the category.
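The scope check can be sketched as a first-pass triage rule. The ten-point threshold and the input shape are assumptions for the example; the branching follows the logic described above.

```python
def diagnose_scope(drop_by_engine, threshold=0.10):
    """Classify a visibility drop by how many engines it affects.

    drop_by_engine maps engine name -> decline in presence rate
    (positive numbers mean visibility got worse).
    """
    affected = [engine for engine, drop in drop_by_engine.items() if drop >= threshold]
    if not affected:
        return "no significant drop"
    if len(affected) == 1:
        return f"engine-specific: {affected[0]}"
    return "structural: check content changes, schema, crawlability, entity signals"

print(diagnose_scope({"chatgpt": 0.18, "perplexity": 0.02, "gemini": 0.01}))
# engine-specific: chatgpt
print(diagnose_scope({"chatgpt": 0.15, "perplexity": 0.12, "gemini": 0.20}))
# structural: check content changes, schema, crawlability, entity signals
```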

If one competitor suddenly gains ground across a prompt cluster, inspect what changed in their page structure and positioning. The winning page often answers the exact buyer question more directly, even if it isn’t longer or more authoritative in the traditional SEO sense.

Don’t optimize the page that lost until you know why it lost. Otherwise, teams rewrite useful content and leave the real problem in place.

Match the fix to the failure

Different signals point to different actions.

- High visibility, weak traffic usually means the brand is being mentioned without a compelling enough role in the answer. The fix is often sharper positioning, stronger comparison framing, or clearer call-to-action alignment on the cited page.

- Traffic without conversions points to a landing experience issue. The answer may be qualifying the visitor well, but the page doesn’t continue the decision journey.

- Strong bot activity without citation lifts often suggests that the site is getting crawled but the content structure still isn’t easy for models to extract and trust.

- Decision-stage weakness with awareness-stage strength means the authority is broad but not commercially persuasive. Comparison content, implementation detail, and proof assets usually matter more here.

Prioritize by commercial impact

Not every AEO issue deserves immediate action. The best teams triage based on buyer intent and likely revenue effect. Decision-stage prompt losses usually come first. Broad informational gaps can wait unless they directly support the commercial path.

A practical prioritization stack looks like this:

- Fix prompt clusters tied to active pipeline

- Repair structural issues that affect multiple pages

- Strengthen entity and authority signals where the brand is absent

- Expand supporting content only after core commercial gaps are addressed
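That stack can be applied mechanically to a backlog. In the sketch below, the issue type labels are hypothetical names for the four tiers listed above.

```python
# Lower number = handle first. Labels are illustrative names for the four tiers.
PRIORITY = {
    "active_pipeline_prompt_cluster": 0,
    "structural_multi_page": 1,
    "entity_or_authority_gap": 2,
    "supporting_content": 3,
}

def triage(issues):
    """Order issues by tier; ties keep their original order (stable sort)."""
    return sorted(issues, key=lambda issue: PRIORITY[issue["type"]])

backlog = [
    {"id": "A", "type": "supporting_content"},
    {"id": "B", "type": "structural_multi_page"},
    {"id": "C", "type": "active_pipeline_prompt_cluster"},
]
print([issue["id"] for issue in triage(backlog)])  # ['C', 'B', 'A']
```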

Iteration also needs patience. Some changes surface quickly in AI responses. Others need repeated re-testing after models refresh. The point isn’t to react to every fluctuation. It’s to make fewer, better changes and verify whether those changes shifted the outcomes that matter.

From Data to Pipeline: A Mini Case Study

A common AEO problem looks like this. A SaaS company has a strong comparison page and decent search traffic, but it keeps losing a high-intent buyer prompt in AI engines. The marketing team assumes the issue is authority. The AtomicAGI workflow shows something more specific.

Weekly prompt testing shows the brand is present, but only as a secondary mention. Competitors are framed as direct recommendations. Engine-level traffic reporting also shows that one AI source is sending qualified visitors, but the landing page isn’t matching the decision-stage intent that brought them there.

The fix isn’t a full content rewrite. It’s a focused restructuring. The team rewrites the page to answer the buyer question more directly, tightens comparison language, improves entity clarity, and updates supporting schema. Then they re-test the same prompts over the next cycle and watch whether the answer context changes from mention to recommendation.

That’s the part often overlooked. The dashboard doesn’t just show improvement. It shows whether the improvement happened in the exact prompt set that influences pipeline. Austin has published additional examples of those outcomes in his roundup of AEO results.

The larger lesson is practical. AEO ROI becomes defensible when the team can point to one chain of evidence: prompt visibility changed, engine referrals changed, on-site behavior changed, and the business got more qualified outcomes from the channel.

If you need a senior operator to build this measurement layer, Austin Heaton works with B2B teams that want AI visibility tied to pipeline, not just reported as another awareness metric.