Q1 AEO Visibility Report: A B2B Guide for 2026

Build your Q1 AEO Visibility Report. This guide provides a B2B strategy to earn AI citations and drive pipeline from ChatGPT, Perplexity, and AI Overviews.

Most Q1 search reports are still measuring the wrong game.

They celebrate rankings, non-brand clicks, and traffic trends as if buyers still move through a ten-blue-links journey. They don't. In B2B, a growing share of discovery now happens inside ChatGPT, Perplexity, Gemini, and Google AI Overviews. If your brand isn't cited, summarized, or recommended there, your report can look healthy while your pipeline quietly weakens.

The fix isn't another SEO dashboard. It's a Q1 AEO Visibility Report built around citation coverage, entity clarity, answer quality, and influenced revenue. Enterprise teams have started moving this way. Smaller companies need a simpler operating model, not another theory deck.

Why Your Q1 SEO Report is Now Obsolete

Traditional SEO reporting assumes one thing that no longer holds up. If you rank, you win.

That assumption breaks when Google answers the query before the click happens. A projection from Airank Lab's AEO market report says Google AI Overview penetration reaches 40–60% of US informational searches in 2026, and pages appearing in those Overviews see a 15–30% reduction in traditional organic click-through rates. The same source also notes a documented example where organic CTR fell 61% from 1.76% to 0.61% when AI Overviews appeared.

That means your top-three ranking can still lose commercial value. Visibility without citation is now a weak outcome.

Rankings don't show influence anymore

A conventional Q1 report usually answers these questions:

- Where do we rank?

- How much organic traffic did we get?

- Which pages gained or lost sessions?

- What keywords improved?

Those are still useful. They just aren't sufficient.

A modern buyer asks an AI engine for a shortlist, compares vendors through follow-up prompts, then clicks one or two brands at most. The brand that gets mentioned early often shapes the shortlist before the visit. That's why teams in regulated and research-heavy categories need a reporting layer that captures influence before the click.

For financial firms, this is especially important because buyers often want synthesized comparisons, market context, and trust cues. If your team works in banking, a useful example of how firms operationalize data-driven decision support is Financial Services Business Intelligence. The lesson isn't about buying software. It's that executive teams already understand business intelligence for finance, but many still haven't applied the same rigor to AI visibility.

Your Q1 report needs two visibility systems

SEO still matters. AEO now sits beside it.

The cleanest way to think about this is a dual system. One layer earns discoverability in Google. The other earns inclusion in AI-generated answers. If your reporting doesn't separate those motions, you'll keep misreading performance. A page can lose clicks and still become more influential because AI systems cite it. Another page can rank well and contribute nothing to buyer consideration because no model trusts it enough to mention it.

Stop treating click loss as the only signal of decline. In AI search, some lost clicks mean Google used your content to answer the question while keeping the user on-platform.

Teams that need a practical model for this split should study the Dual Visibility Framework for Google and AI search. The core idea is simple. Track what ranks, but report what gets cited.

What belongs in a replacement report

A serious Q1 AEO Visibility Report should include:

| Legacy SEO view | Useful AEO replacement |
|---|---|
| Keyword rank | Citation presence by priority query |
| Organic CTR | AI mention frequency and context |
| Traffic by landing page | AI-influenced visits and assisted conversions |
| Backlink count | Authority signals tied to citation wins |
| Average position | Share of voice across answer engines |

If your leadership team still sees rankings as the headline KPI, change that now. Rankings are an input. Recommendation visibility is the outcome.

Mapping Your Audience to AI Query Intent

Many organizations still do keyword research as if buyers type fragments into a search bar. They don't. They ask questions with context, constraints, and follow-ups.

That's why the first job in a Q1 AEO Visibility Report isn't content production. It's query modeling. You need a documented set of prompts that mirrors how your buyers ask for help inside AI systems.

A benchmark from Conductor's AEO and GEO report says AI referral traffic constitutes 1.08% of all website traffic, growing at ~1% monthly, and 27% of AI visitors become sales-qualified compared with 2-5% from traditional organic search. The traffic share still looks small. The intent quality doesn't.

Start with pains, not keywords

Build your prompt set from buyer problems, not from your existing SEO list.

For a B2B SaaS company, the wrong seed term is "customer onboarding software." The better starting point is the actual buying tension:

- Our implementation team is overloaded

- Sales keeps promising fast onboarding

- We need fewer support escalations after launch

- We need a tool that works with our current stack

Those pains become AI-style prompts such as:

- Which onboarding platforms are best for a mid-market SaaS company with a lean implementation team?

- What should I compare when evaluating onboarding software for enterprise accounts?

- Is it better to buy onboarding software or build workflows inside our existing tools?

- What onboarding tools integrate well with a modern SaaS stack and support customer success teams?

That's the shift. You stop targeting labels and start targeting evaluation language.

If you want examples grounded in software buying behavior, the top AI search use cases for SaaS is a useful reference point because it reflects how prospects move from problem discovery to vendor shortlisting.

Use a prompt model for each buying committee

One keyword map isn't enough in B2B. The CFO, RevOps lead, security reviewer, and product owner all ask different questions.

Use a simple structure like this:

| Buyer role | Primary concern | Query style | Desired asset |
|---|---|---|---|
| CFO | Cost control and ROI | Comparison and risk prompts | Buyer guide, TCO page |
| Head of IT | Integration and governance | Technical fit prompts | Documentation, schema-rich product pages |
| Demand Gen lead | Speed and reporting | Workflow and use-case prompts | Templates, implementation pages |
| Procurement | Vendor confidence | Trust and proof prompts | Security, compliance, expert pages |

A FinTech example makes this obvious. A CFO won't ask, "best treasury SaaS." They are more likely to ask for safe comparisons, implementation tradeoffs, reporting quality, and vendor trust. A technical evaluator inside an AI startup will ask whether a tool supports governance, APIs, model flexibility, deployment options, and documentation depth.

Build your first Q1 prompt library

Don't overcomplicate the first pass. Build a working library your team can test weekly.

Use this sequence:

1. List revenue categories. Start with your highest-value product lines, use cases, or industries.

2. Write real buying questions. Pull language from sales calls, demos, Gong snippets, support tickets, and customer interviews.

3. Expand into multi-turn prompts. Add follow-ups such as "compare," "for mid-market," "with compliance requirements," or "for a lean team."

4. Tag each prompt by intent. Use buckets like problem discovery, shortlist creation, comparison, objection handling, and implementation.

5. Map each prompt to an existing page or content gap. If no asset serves the query, put it in the content queue.

Practical rule: If a prompt sounds like something only a marketer would write, delete it. AI visibility comes from matching buyer language, not publishing jargon.
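A prompt library is easier to keep honest when it lives as structured data rather than a loose doc. The sketch below is one possible shape, not a prescribed tool: the `Prompt` class, the `content_gaps` helper, and the page path are hypothetical names chosen for illustration, while the intent buckets and sample prompts come from this section.

```python
from dataclasses import dataclass
from typing import Optional

# Intent buckets taken from the tagging step in the sequence above.
INTENTS = {
    "problem discovery",
    "shortlist creation",
    "comparison",
    "objection handling",
    "implementation",
}


@dataclass
class Prompt:
    text: str                          # buyer-language question, as asked in an AI engine
    revenue_category: str              # product line, use case, or industry
    intent: str                        # must be one of INTENTS
    mapped_page: Optional[str] = None  # path of the serving asset; None means no asset yet

    def __post_init__(self) -> None:
        # Reject free-form tags so the library stays comparable week to week.
        if self.intent not in INTENTS:
            raise ValueError(f"unknown intent: {self.intent!r}")


def content_gaps(library: list[Prompt]) -> list[Prompt]:
    """Return prompts with no mapped asset; these belong in the content queue."""
    return [p for p in library if p.mapped_page is None]


# Hypothetical entries; the page path is illustrative only.
library = [
    Prompt(
        text="Which onboarding platforms are best for a mid-market SaaS company "
             "with a lean implementation team?",
        revenue_category="onboarding",
        intent="shortlist creation",
        mapped_page="/compare/onboarding-platforms",
    ),
    Prompt(
        text="Is it better to buy onboarding software or build workflows "
             "inside our existing tools?",
        revenue_category="onboarding",
        intent="objection handling",
    ),
]

for gap in content_gaps(library):
    print(f"[content queue] {gap.intent}: {gap.text}")
```

Validating the intent tag at creation time is a deliberate choice here: it keeps reports comparable from week to week, at the cost of forcing the team to agree on the bucket list up front.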

Two examples that usually expose weak research

FinTech platform targeting CFOs

Bad prompt set:

- best fintech software

- treasury management platform

- finance automation tools

Better prompt set:

- what should a CFO look for when evaluating treasury software for a multi-entity business?

- which treasury platforms are easiest to implement without creating reporting risk?

- compare treasury systems for finance teams that need stronger cash visibility and controls

AI startup targeting technical leaders

Bad prompt set:

- AI platform

- LLM ops tool

- model observability software

Better prompt set:

- which AI platforms give engineering teams the most control over model governance?

- what should a CTO compare when selecting infrastructure for production LLM applications?

- best tools for monitoring AI application performance across multiple models

The teams that win AEO don't publish more. They publish against a sharper prompt map.

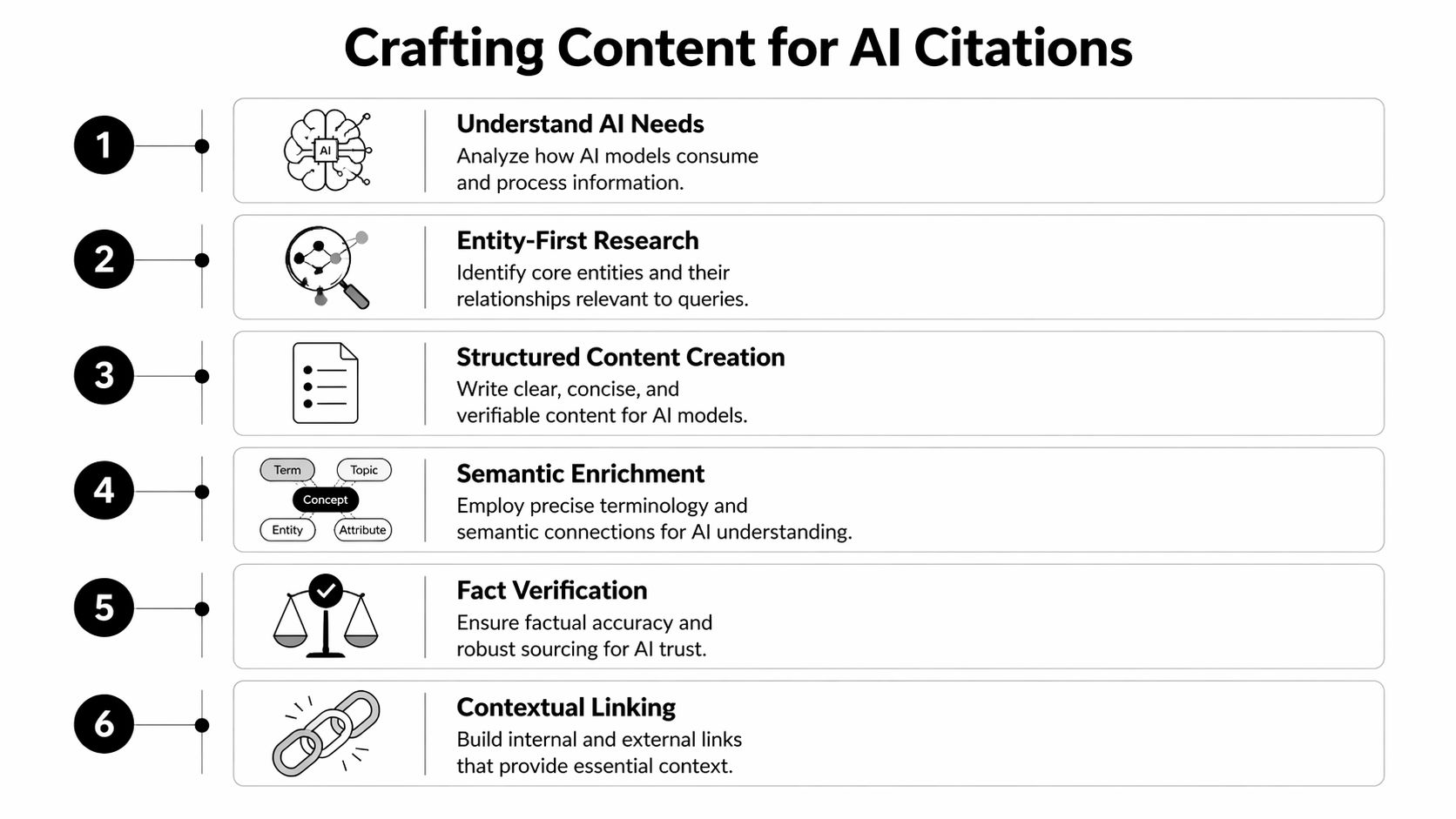

Building Content That Earns AI Citations

Most B2B content fails in AI search for one simple reason. It reads like marketing copy, not source material.

LLMs don't reward clever positioning statements. They reward clear answers, verifiable claims, strong entity signals, and structured information. If your page opens with fluffy brand language and buries the answer, you've made citation harder than it needs to be.

A benchmark summarized by Monday.com's answer engine optimization guide notes that content older than 90 days without updates risks citation drops of 20-40%, and that mature brands tend to hold 15-35% Share of Voice in their category. The practical takeaway is blunt. Fresh, authoritative content earns repeated inclusion. Stale pages fade.
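If you want to act on that 90-day threshold, a small staleness check is enough to start. This is a minimal sketch that assumes you can export each page's last substantive update date; the `stale_pages` function, the page paths, and the dates are hypothetical.

```python
from datetime import date

STALE_AFTER_DAYS = 90  # threshold from the benchmark quoted above


def stale_pages(last_updated: dict[str, date], today: date) -> list[str]:
    """Return page paths last updated more than STALE_AFTER_DAYS ago, oldest first."""
    overdue = [
        (updated, path)
        for path, updated in last_updated.items()
        if (today - updated).days > STALE_AFTER_DAYS
    ]
    return [path for _, path in sorted(overdue)]


# Hypothetical inventory: path -> date of last substantive update.
pages = {
    "/guides/treasury-software": date(2025, 9, 1),
    "/compare/onboarding-platforms": date(2026, 1, 20),
    "/blog/cloud-governance": date(2025, 11, 15),
}

for path in stale_pages(pages, today=date(2026, 3, 1)):
    print(f"[refresh queue] {path}")
```

Sorting oldest first gives the content lead a ready-made refresh order.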

Write answer-first blocks, not vague intros

Your first paragraph should answer the query directly. Not "explore the changing environment." Not "businesses today face many challenges." Answer the question.

Compare this.

Before

Cloud governance is an important consideration for modern enterprises seeking to improve security, compliance, and operational efficiency across their digital environments. Organizations must address a range of challenges while selecting tools that align with strategic priorities.

After

Cloud governance software helps enterprises enforce policies, monitor usage, and reduce compliance risk across cloud environments. Buyers should compare policy controls, audit readiness, role-based access, integration support, and reporting depth before shortlisting vendors.

The second version gives an AI system something extractable. The first gives it fog.

The content pattern that gets cited more often

Use this page structure on commercial and informational assets:

1. Direct answer near the top. Give a concise definition, recommendation set, or comparison frame immediately.

2. Scannable lists and subheads. AI systems handle clearly segmented content better than long narrative blocks.

3. Entity-rich language. Name the product category, user role, use case, process, and technical concepts plainly.

4. Evidence and attribution. Quote your own experts only when they have a visible profile, credentials, or clear role on the site.

5. Updated timestamps and revision discipline. Pages need routine refreshes, especially in fast-moving categories.

For legal-tech and compliance-heavy categories, content quality standards are even stricter because the reader expects precision. If you want a live example of how a niche topic can be framed around practical utility instead of generic hype, this piece on AI legal assistants is useful because it focuses on clear use cases rather than vague AI language.

Give writers citable instructions

Don't tell writers to "make it SEO-friendly." Give them production rules.

Use prompts like:

- Define the term in the first two sentences.

- Include one section that compares options by buyer need.

- Break complex topics into numbered criteria.

- Add a short summary under each H3.

- Use precise nouns over broad category language.

- Remove any paragraph that could apply to every company in the market.

The AEO content checklist for B2B pages is a solid benchmark for editorial review because it forces teams to check answer structure, authority cues, and technical readiness before publishing.

A simple editorial filter for Q1

Use this before any page goes live:

| Question | If the answer is no |
|---|---|
| Does the page answer the core query in the opening? | Rewrite the introduction |
| Can a model pull a clean list from it? | Add bullets, tables, or numbered criteria |
| Is the author or source clearly attributable? | Add expert profile or organizational context |
| Is the page current enough to trust? | Refresh examples, definitions, and page date |
| Does the page help a buyer decide? | Add comparison logic, objections, or next-step guidance |

The best AEO content doesn't sound robotic. It sounds decisive, specific, and easy to quote.

A lot of teams publish "thought leadership" that never becomes recommendation material. If the content can't support a buying decision, don't expect an AI engine to use it in one.

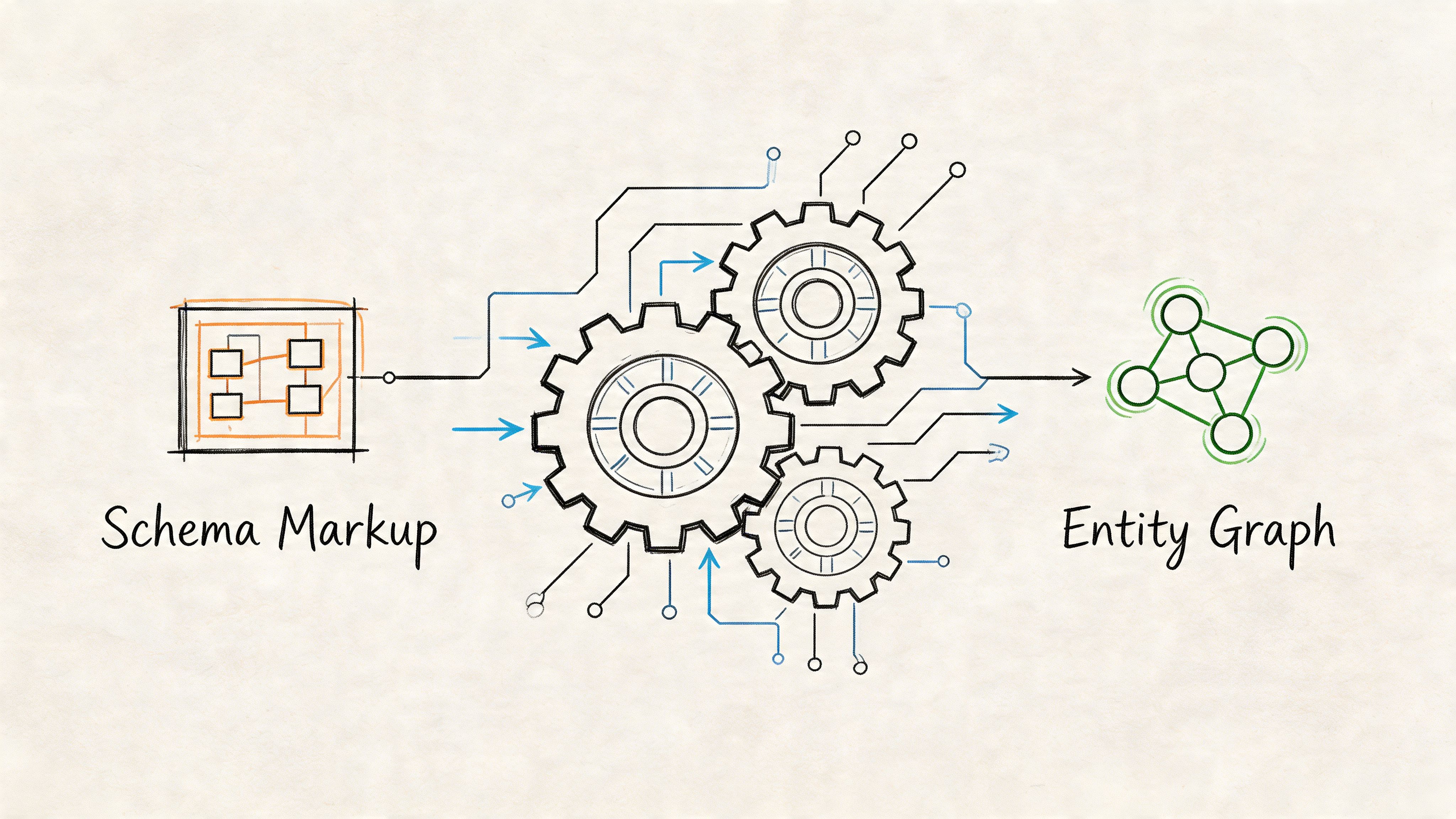

Implementing Technical and Entity SEO for AEO

Good content without entity clarity is fragile. AI systems need to know who you are, what your company does, which experts speak for it, and how pages relate to each other.

That is where most SMB teams fall behind. They publish articles, but they never build the machine-readable layer that ties the brand, the people, the product, and the topic together.

A warning from Signal Inc's AEO guide matters here. It argues that global one-size-fits-all AEO strategies are ineffective, and that brands strong in US Google results can disappear in EU AI responses because of entity schema gaps. That's not a content issue. That's a technical authority issue.

The three schema types most B2B sites need first

You don't need a giant structured data rollout to start. You need the right base layer.

Organization schema

Use it to define the company as a distinct entity. Include brand name, website, social profiles, logo, and a concise description of what the business does.

Why it matters: this creates a stable reference point that AI systems can connect to product pages, articles, and author profiles.

Person schema

Use it for founders, executives, product leaders, or subject matter experts who appear in content. Tie each profile back to the organization and to authored content.

Why it matters: AI systems weigh expertise more confidently when the expert exists as a clear entity on the site rather than as a byline with no context.

FAQ or article-level structured markup

Use it where the page contains question-and-answer blocks or a well-defined article. Don't force FAQ schema onto pages that aren't written that way.

Why it matters: this helps models and search systems identify extractable answers and topical focus.

If your team needs a stronger foundation for this work, this guide on building entity authority for SaaS is the right implementation reference because it connects schema, brand entities, and topical trust.

A practical JSON-LD starter example

For a fictional B2B SaaS company, the structure would look something like this in practice:

- An Organization object for the company

- A Person object for the head of product or subject matter expert

- An Article object on the page that references the author and publisher

- An FAQPage object only when the page includes real questions and direct answers

The point isn't to paste random schema from a generator. The point is to make sure your core entities connect consistently across templates.

If your company name, author profiles, and product pages aren't semantically connected, AI systems have to guess. Don't make them guess.
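To make the connection concrete, here is a hedged sketch that builds the linked objects in Python and prints JSON-LD for a `<script type="application/ld+json">` tag. The company, person, URLs, and dates are all placeholders, and the `faq_page` helper is a hypothetical name. The schema.org types and properties used (`Organization`, `Person`, `Article`, `FAQPage`, `worksFor`, `author`, `publisher`, `mainEntity`, `acceptedAnswer`) are standard vocabulary. Treat it as a starting shape to adapt, not a validated production template.

```python
import json

BASE = "https://www.example.com"  # placeholder domain

organization = {
    "@type": "Organization",
    "@id": f"{BASE}/#organization",
    "name": "ExampleCo",  # fictional company
    "url": BASE,
    "logo": f"{BASE}/logo.png",
    "description": "ExampleCo makes customer onboarding software for mid-market SaaS teams.",
    "sameAs": ["https://www.linkedin.com/company/exampleco"],
}

person = {
    "@type": "Person",
    "@id": f"{BASE}/team/jane-doe#person",
    "name": "Jane Doe",  # fictional expert
    "jobTitle": "Head of Product",
    "worksFor": {"@id": organization["@id"]},  # ties the expert to the company
}

article = {
    "@type": "Article",
    "@id": f"{BASE}/guides/onboarding-software#article",
    "headline": "How to evaluate onboarding software",
    "dateModified": "2026-01-15",
    "author": {"@id": person["@id"]},           # ties the page to the expert
    "publisher": {"@id": organization["@id"]},  # ties the page to the company
}


def faq_page(page_url, questions_and_answers):
    """Build an FAQPage node, or None when the page has no real Q&A blocks."""
    if not questions_and_answers:
        return None
    return {
        "@type": "FAQPage",
        "@id": f"{page_url}#faq",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions_and_answers
        ],
    }


nodes = [organization, person, article]
faq = faq_page(f"{BASE}/guides/onboarding-software", [])  # this page has no Q&A, so no FAQPage
if faq is not None:
    nodes.append(faq)

print(json.dumps({"@context": "https://schema.org", "@graph": nodes}, indent=2))
```

The `@id` references are the part that matters. They let the same organization and person nodes be reused across templates instead of being redefined, slightly differently, on every page.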

Regional and governance signals matter

Many brands build one global website and assume the models will sort out market nuance. They won't. Region-specific pages, localized trust cues, and clean entity associations help AI systems distinguish market relevance.

That includes details like:

- country or region context on industry pages

- market-specific compliance language where appropriate

- localized expert pages

- consistent naming conventions across site sections

- governance files and crawl guidance for AI-era use


Teams also ask about llms.txt. My view is simple. Use it as a governance signal, not as a substitute for technical SEO. It can clarify preferred access and content handling, but it won't rescue weak schema, fragmented entities, or thin source pages.

Measuring and Amplifying AI Visibility

If your Q1 AEO Visibility Report doesn't connect visibility to commercial outcomes, it turns into another awareness deck.

The core metric I use first is Citation Rate. According to Discovered Labs' AEO measurement framework, calculate it as (Your brand citations / Total relevant queries tested) × 100. The same methodology notes that 27% of visitors from AI engines convert to sales-qualified leads, a 5.4x to 13.5x multiplier over typical organic search conversion rates.

That is why citation tracking matters. It isn't vanity. It's an early revenue signal.
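The multiplier range is simple division. The check below assumes the baseline is the 2-5% organic rate quoted earlier in this guide; the variable names are illustrative.

```python
AI_SQL_RATE = 27.0              # percent of AI-engine visitors who become SQLs (quoted above)
ORGANIC_SQL_RANGE = (2.0, 5.0)  # percent range for traditional organic search (assumed baseline)

low = AI_SQL_RATE / ORGANIC_SQL_RANGE[1]   # measured against the strongest organic rate
high = AI_SQL_RATE / ORGANIC_SQL_RANGE[0]  # measured against the weakest organic rate

print(f"{low:.1f}x to {high:.1f}x")
```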

How to calculate Citation Rate in a real Q1 report

Let's use a hypothetical FinTech company.

The team selects a defined query set covering product comparisons, implementation questions, reporting concerns, and vendor evaluation prompts. They test those prompts across ChatGPT, Perplexity, Google AI Overviews, and Bing Copilot on a weekly cadence. For each query, they record whether the brand appears, where it appears, and how it is framed.

The formula stays simple:

Citation Rate = (Brand citations / Total relevant queries tested) × 100

If the brand appears in a small share of tracked prompts at the start of Q1, that's your baseline. If the rate rises by the end of the quarter, you now have a leading indicator that your content and entity work are landing. Then you layer in UTM tracking, form field capture, and CRM attribution to see which AI-assisted visits move into pipeline.
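As a minimal sketch, the formula translates to a few lines of Python. The `citation_rate` function name and the counts are hypothetical and exist only to show the calculation; they are not benchmarks. The sketch assumes each tested query yields one yes-or-no citation result, so citations can never exceed queries tested.

```python
def citation_rate(brand_citations: int, queries_tested: int) -> float:
    """Citation Rate = (brand citations / total relevant queries tested) x 100."""
    if queries_tested <= 0:
        raise ValueError("queries_tested must be positive")
    if not 0 <= brand_citations <= queries_tested:
        raise ValueError("brand_citations must be between 0 and queries_tested")
    return brand_citations / queries_tested * 100


# Hypothetical counts for illustration only.
baseline = citation_rate(brand_citations=6, queries_tested=50)
end_of_quarter = citation_rate(brand_citations=14, queries_tested=50)

print(
    f"baseline {baseline:.1f}%, end of Q1 {end_of_quarter:.1f}%, "
    f"change {end_of_quarter - baseline:+.1f} points"
)
```

Keep the query set fixed between the two measurements. If the denominator changes mid-quarter, the movement in the rate stops meaning anything.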

The report should separate leading and lagging indicators

A strong executive dashboard uses both.

Leading indicators

- Citation Rate across each answer engine

- Share of voice by topic cluster

- Mention context and positioning

- Freshness status of citation-winning pages

- Presence across high-intent prompts

Lagging indicators

- AI-referred sessions

- Sales-qualified leads from AI traffic

- Pipeline influenced by AI-originating visits

- Closed revenue tied to AI-assisted journeys

- Assisted conversions from cited pages

The AEO metrics and tracking stack for B2B companies is a useful blueprint if your team needs to operationalize this in GA4, your CRM, and your reporting layer.
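Counting AI-referred sessions starts with classifying referrers. The sketch below assumes you can export raw referrer URLs from your analytics tool. The hostname map is my assumption and needs checking against the referrers that appear in your own data, and `ai_engine_for` is a hypothetical helper. Google AI Overviews is left out on purpose, on the assumption that those visits cannot be separated from ordinary Google referrals by hostname alone.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed hostname-to-engine map; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_engine_for(referrer_url: str) -> Optional[str]:
    """Return the answer engine for a referrer URL, or None if it is not a known AI host."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


# Hypothetical session referrers; the empty string stands for direct traffic.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=treasury+software",
    "https://www.google.com/",
    "https://chatgpt.com/c/abc",
    "",
]

ai_sessions = Counter(filter(None, map(ai_engine_for, referrers)))
for engine, count in ai_sessions.most_common():
    print(f"{engine}: {count}")
```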

What to record every week

Don't rely on one screenshot or one prompt. Use a repeatable worksheet.

| Field | What to capture |
|---|---|
| Query | Exact prompt tested |
| Engine | ChatGPT, Perplexity, Google AI Overviews, Bing Copilot |
| Brand cited | Yes or no |
| Mention order | Early mention or later mention |
| Context | Neutral, favorable, weak, or unclear |
| Landing asset | The page most likely supporting the citation |
| Commercial intent | Discovery, comparison, shortlist, or decision |

This is where many teams cut corners. They track yes or no citation presence and ignore framing. That misses the difference between being treated as a credible option and being mentioned as an afterthought.

Practical rule: A late mention in a weakly framed answer is not a win. Measure mention quality, not just mention existence.
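One way to enforce that rule is to log each test as a structured row and report two numbers per engine: raw citation rate and quality mention rate. The sketch below mirrors the worksheet fields. The `WorksheetRow` class, the helper functions, the definition of a quality mention (cited early, in a favorable or neutral frame), and the sample rows are all my assumptions for illustration; adjust the rule to your own standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class WorksheetRow:
    query: str              # exact prompt tested
    engine: str             # e.g. "ChatGPT", "Perplexity"
    brand_cited: bool
    mention_order: str      # "early" or "later"; "" when not cited
    context: str            # "favorable", "neutral", "weak", "unclear"; "" when not cited
    landing_asset: str      # page most likely supporting the citation
    commercial_intent: str  # "discovery", "comparison", "shortlist", or "decision"


def is_quality_mention(row: WorksheetRow) -> bool:
    """Assumed rule: cited early, in a favorable or neutral frame."""
    return (
        row.brand_cited
        and row.mention_order == "early"
        and row.context in {"favorable", "neutral"}
    )


def rates_by_engine(rows: list[WorksheetRow]) -> dict[str, tuple[float, float]]:
    """Map engine -> (citation rate %, quality mention rate %)."""
    tested: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    quality: dict[str, int] = defaultdict(int)
    for row in rows:
        tested[row.engine] += 1
        cited[row.engine] += row.brand_cited          # True counts as 1
        quality[row.engine] += is_quality_mention(row)
    return {
        engine: (cited[engine] / n * 100, quality[engine] / n * 100)
        for engine, n in tested.items()
    }


# Hypothetical weekly log.
rows = [
    WorksheetRow("compare treasury systems", "ChatGPT", True, "early", "favorable",
                 "/compare/treasury", "comparison"),
    WorksheetRow("easiest treasury platform to implement", "ChatGPT", True, "later", "weak",
                 "/guides/implementation", "shortlist"),
    WorksheetRow("compare treasury systems", "Perplexity", True, "later", "weak",
                 "/compare/treasury", "comparison"),
    WorksheetRow("easiest treasury platform to implement", "Perplexity", False, "", "",
                 "", "shortlist"),
]

for engine, (cite_pct, quality_pct) in rates_by_engine(rows).items():
    print(f"{engine}: cited {cite_pct:.0f}%, quality mentions {quality_pct:.0f}%")
```

Reporting both numbers side by side makes the gap visible: an engine can show a high citation rate while most of those mentions are late and weakly framed.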

Amplification is not just link building

Once you know which topics produce citations, push authority into those areas from outside your site.

That means:

- placing expert commentary in industry publications

- building contributor profiles for named subject matter experts

- earning mentions on relevant partner sites

- publishing comparison and buyer education assets that others reference

- tightening internal links from high-trust pages to target assets

This is also where digital PR and authority-building articles help. Not because AI systems count backlinks the old way, but because third-party references reinforce who the credible entities are in a topic.

A lot of SEO teams still obsess over ranking reports while doing almost nothing to shape off-site authority signals. That's backward. In AI search, recommendation quality improves when the broader web already describes your brand as a trusted source.

Your Q1 AEO Governance and Action Plan

Most AEO programs fail for boring reasons. Nobody owns the prompt set. Content ships without expert review. Technical changes sit in a backlog. Reporting lives in a spreadsheet no executive reads.

Fix governance, and the rest gets easier.

The right operating model for a Q1 AEO Visibility Report is lean. One owner drives the report. One content lead manages asset creation and refreshes. One technical lead owns schema and entity consistency. Revenue ops or demand gen handles attribution and form tracking. Subject matter experts review only the pages that shape buyer decisions.

Month one audit and map

Start with an audit that answers four questions:

- Where does the brand already appear across major answer engines?

- Which buyer prompts matter most by revenue category?

- Which existing pages are plausible citation candidates?

- Where are the technical gaps in entity clarity and schema coverage?

Then build the first prompt library, cluster it by intent, and assign each prompt to either an existing page or a content gap. This is also the month to align leadership on what the report will measure. If executives still ask for rankings first, reset expectations.

Month two content and tech sprint

This is production month. Refresh stale pages. Rewrite weak intros. Add answer-first structures, comparisons, FAQs where appropriate, and visible expert attribution. Ship the schema fixes that help AI systems connect your organization, people, and topics.

A lot of teams also benefit from looking outside marketing for language that buyers use. Customer success teams hear objections and evaluation criteria every week. That's why resources about AI for customer success can be surprisingly valuable for AEO planning. They surface the operational questions and friction points that often become strong AI prompts later in the journey.

Your best AEO insights usually come from sales calls, onboarding friction, and support conversations. Not from a keyword tool.

Month three measure and amplify

By the third month, you need a live reporting rhythm.

Run recurring prompt tests across answer engines. Review citation changes by topic cluster. Tie AI-referred visits to CRM stages where possible. Then amplify what works. If one comparison page starts earning mentions, support it with internal links, expert commentary, fresh updates, and off-site references.

Use a standing cadence like this:

| Cadence | Owner | Output |
|---|---|---|
| Weekly | Growth or SEO lead | Prompt test log and citation changes |
| Biweekly | Content and SME leads | Refresh queue and new asset approvals |
| Monthly | Technical lead | Schema and entity issue review |
| Monthly | RevOps or demand gen | AI traffic and pipeline attribution update |
| End of quarter | CMO or growth lead | Executive Q1 AEO Visibility Report |

What executives should see on one page

Keep the final report blunt.

Show:

- current citation coverage on priority prompts

- strongest and weakest topic clusters

- key pages driving AI visibility

- AI-referred lead quality

- pipeline influence from AI-originating or AI-assisted sessions

- next quarter's content and technical priorities

Don't bury the board or C-suite in screenshots from answer engines. Show movement, commercial relevance, and operational next steps.

A strong Q1 AEO Visibility Report doesn't try to prove that AI is important. That argument is already over. It proves whether your brand is becoming recommendable where buyers now ask their most important questions.

If you want help building a Q1 AEO Visibility Report that ties citation gains to pipeline, Austin Heaton helps B2B SaaS, FinTech, AI, and e-commerce teams turn AI visibility into measurable revenue outcomes.